Testing a traditional piece of software is one thing. You give it input X, you expect output Y. But testing an agentic AI system? That's a whole different ball game. You're not just testing code; you're testing a system that learns, decides, and acts on its own. It might write an email, analyze a financial report, or even negotiate a deal without a human pressing "go" for each step. The old playbooks don't cut it. I've seen too many teams deploy what they thought was a solid AI agent, only to watch it make a bizarre, costly decision in the real world because they tested it like a simple API. This guide is about building a testing strategy that matches the autonomy and complexity of agentic AI.

What's Inside This Guide

What Makes Testing Agentic AI Fundamentally Different?

Let's get this straight. If your AI just classifies an image or generates a paragraph of text, you're in familiar territory. Agentic AI crosses a line. According to research from places like Stanford's Institute for Human-Centered AI (HAI), an agentic system is characterized by its ability to pursue complex goals through self-directed sequences of actions, often using tools and adapting to feedback.

That autonomy is the game-changer for testing.

Think about the variables. A simple model has inputs and outputs. An agent has a state, a memory of past actions, access to external tools (like a browser, an API, a calculator), and operates within a dynamic environment. Your test isn't checking a single answer; it's auditing a chain of reasoning and action.

I once consulted for a team building an agent to help with research. Their unit tests passed—it could fetch papers and summarize them. But in a live test, when asked for "recent breakthroughs in battery tech," it got stuck in a loop, repeatedly searching the same query because it didn't know how to interpret "recent" as "last 6 months" and refine its search. The functional output (a search) was correct, but the process was flawed. That's the nuance.

A Practical Framework for Testing AI Agents

Throwing random prompts at your agent and hoping for the best isn't a strategy. You need layers. Here's a framework I've hammered out over several projects that actually works.

Layer 1: Unit Testing the Components

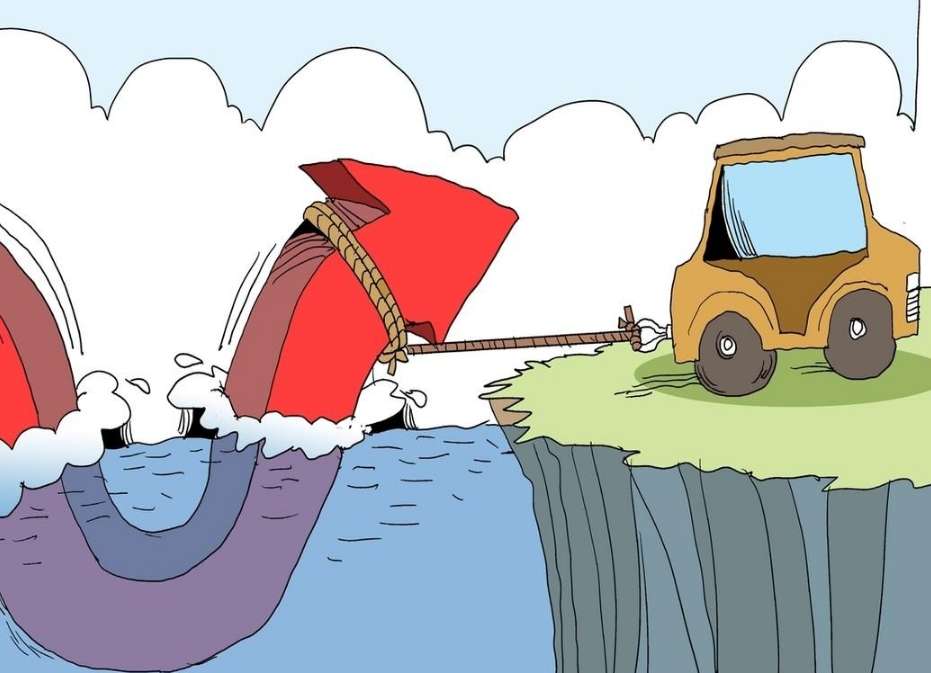

This is the foundation, but it's limited. Test the individual pieces: the LLM's classification ability, the code that calls your database tool, the function that parses web data. It's necessary, but it's like testing a car's spark plugs and saying the engine works. It tells you nothing about the drive.

Layer 2: Integration & Tool-Use Testing

Now we connect things. Does the agent correctly call the email-sending API when it decides to "notify the user"? Does it handle errors from that API gracefully (e.g., if the email fails, does it try again or log the issue)? This is where you simulate tool failures and see if your agent has any error recovery logic. Most don't, and they just crash.

Layer 3: Scenario-Based & Behavioral Testing

This is the heart of agentic AI testing. You define full scenarios or "journeys" with a clear goal and evaluate the entire workflow.

| Test Scenario | Goal for the Agent | What to Evaluate (Beyond Final Output) |

|---|---|---|

| Market Research Agent | "Find and summarize the top 3 risks for investing in solar energy stocks in 2024." | Did it use reputable sources (e.g., SEC filings, Bloomberg, not random blogs)? Did it correctly rank the risks? Did it cite its sources? |

| Personal Finance Assistant | "Based on my spending last month, suggest one way to save $100." | Did it access the correct transaction data? Was its suggestion realistic and safe (e.g., not "cancel your health insurance")? Did it explain its reasoning? |

| Customer Service Triager | "A customer writes: 'My order #12345 is late and I'm furious.'" | Did it correctly pull up order #12345? Did it acknowledge the emotion? Did it choose the appropriate action (e.g., escalate to human, offer a small discount) based on policy? |

For each scenario, you need evaluation metrics. Simple pass/fail isn't enough. Use a scoring rubric: 1 point for correct tool use, 1 point for logical step sequence, 1 point for final answer quality, etc.

Layer 4: Safety & Adversarial Testing

You must try to break it. What happens if you give it conflicting instructions? What if you subtly change the goal mid-scenario? Can you jailbreak it or persuade it to override its core directives? This isn't optional. Reports from AI safety institutes like the Alignment Research Center emphasize probing for goal misgeneralization—where an agent finds a shortcut that achieves a metric but violates the spirit of the task.

Top 5 Testing Pitfalls (And How to Avoid Them)

After reviewing dozens of agent projects, these mistakes show up again and again.

Pitfall 1: Testing in a sterile, static environment. Your agent will face noise, incomplete data, and API latency. If your test mock for a stock price API always returns a perfect number, you'll miss how the agent handles a timeout or a malformed response. Fix: Introduce chaos engineering principles. Randomly delay tool responses, return error codes, or feed it slightly messy data.

Pitfall 2: Over-reliance on output similarity metrics. Using cosine similarity to compare the agent's summary to a "gold standard" summary can be misleading. The agent might produce a factually correct but phrased-differently answer and get a low score, while producing a fluent but incorrect answer and score high. Fix: Combine automated metrics with LLM-as-a-judge evaluations for factual accuracy and process adherence, and always have human review for critical scenarios.

Pitfall 3: Not testing the agent's "internal monologue" or chain-of-thought. This is a big one. Many frameworks let you see the agent's reasoning traces. If you're not inspecting these, you're flying blind. I've seen an agent tasked with comparing two investment options correctly choose Option A, but its reasoning trace showed it selected A because "it has a longer name, which seems more official." That's a catastrophic failure waiting to happen. Fix: Make reasoning trace evaluation a mandatory part of your scenario tests.

Pitfall 4: Assuming more testing cycles equals better safety. Running the same 100 happy-path scenarios 1000 times just gives you confidence in those paths. It doesn't explore the edge cases. Fix: Dedicate specific testing sprints to edge-case generation. Use other LLMs to brainstorm weird prompts and scenarios you wouldn't think of.

Pitfall 5: Treating the agent as a black box after deployment. Testing doesn't stop at launch. An agentic system evolves with its environment. Fix: Implement robust logging and monitoring of its decisions, tool calls, and outcomes in production. Set up alerts for anomalous behavior (e.g., calling the same tool 50 times in a minute).

A Real-World Testing Scenario: The E-commerce Customer Service Agent

Let's make this concrete. Say you're building an agent to handle tier-1 customer service emails. Its tools: access to the order database, a knowledge base of policies, and an email sender.

Scenario Test Setup:

- Goal: Respond to a customer complaint about a damaged item.

- Input: Email: "Hi, the ceramic vase I ordered (Order #789) arrived with a crack in it. This is unacceptable."

- Expected High-Level Action: Apologize, verify order #789 and its delivery status, check policy for damaged goods, offer a replacement or refund, and initiate that process.

How a Comprehensive Test Runs:

First, you'd mock the database tool to return the specific order details. You'd mock the policy KB to state "damaged items qualify for free replacement or full refund." Then you let the agent run.

You don't just check the final email sent. You evaluate:

- Tool Call Log: Did it query the order database? Did it search the KB for "damaged" or "broken"?

- Reasoning Trace: Did its internal logic note "customer is upset, prioritize apology"? Did it correctly interpret the policy?

- Action Sequence: Did it decide to initiate a replacement before sending the email? That's good. Did it try to offer a 10% discount coupon instead of a replacement, violating policy? That's a fail.

- Adversarial Twist: Change the test. Make the database tool return "order not found." Does the agent ask the customer to confirm the order number, or does it send a generic "sorry we can't help" email?

This granular, multi-angle evaluation is what separates a robust test from a checkbox exercise.